Key Takeaways

- Nielsen’s 10 usability heuristics give you a clear checklist to uncover UX issues in Dovetail’s AI tagging, playback, and collaboration features.

- Common problems include missing AI processing feedback, complex permission settings, and weak undo options, as reported in G2 and Capterra reviews.

- Run evaluations with 3 evaluators over 2 to 4 hours, use a 0 to 4 severity scale, and prioritize high-impact quick wins that lift adoption.

- SaaSHero adds 7 CRO principles such as Clarity and Friction reduction; its TripMaster case study reports 650% ROI from revenue-focused UX fixes.

- Partner with SaaSHero for a professional Dovetail heuristic audit to turn UX improvements into measurable growth.

Setup Checklist for Your Dovetail Evaluation

Set up a 14-day Dovetail trial account, choose a screenshot tool, and review Nielsen’s original 10 usability heuristics. Focus on Projects, video clips, AI tags and agents, collaboration boards, and session playback interfaces. Block 2 to 4 hours and involve 3 evaluators to reduce individual bias, which matches standard SaaSHero practice. Follow a 4-step framework: prepare with templates, audit each interface, score and prioritize issues, then apply SaaSHero’s revenue-focused CRO fixes.

Framework: Heuristics Plus Revenue-Focused CRO

Use Nielsen’s 10 heuristics as the backbone of your Dovetail UX review. Cover Visibility of System Status, Match Between System and Real World, User Control and Freedom, Consistency and Standards, Error Prevention, Recognition Rather Than Recall, Flexibility and Efficiency of Use, Aesthetic and Minimalist Design, Help Users Recognize and Recover from Errors, and Help and Documentation. Move through preparation, audit execution, analysis, and SaaSHero’s CRO implementation in sequence. Extend the review with SaaSHero’s 7 conversion principles such as Relevance, Clarity, Trust, and Friction reduction, which directly support revenue growth. Get your free Dovetail evaluation template to start quickly.

Step 1: Check Visibility of System Status in Dovetail

Confirm that Dovetail gives clear feedback during AI agent processing, video uploads, and tagging actions. Users report slow loading times for large project datasets that hurt real-time collaboration. Note missing progress indicators during AI transcription, absent loading states for dashboard generation, and unclear status when agents process feedback. Build a violation table with columns for Issue, Severity (0 to 4), and SaaSHero Fix. For example, “AI agent processing lacks progress feedback” earns Severity 3, and SaaSHero recommends micro-animations plus estimated completion times, similar to our PetDesk CRO work. Test mobile responsiveness for status indicators and apply SaaSHero’s Clarity principle with 5-second comprehension tests.
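The violation table described above can be kept as structured records rather than free-form notes. The following is a minimal Python sketch, not a SaaSHero tool: the `Violation` class and `render_table` helper are hypothetical names, and the severity labels follow Nielsen’s commonly cited 0 to 4 ratings, since the text here only fixes the numeric range.

```python
from dataclasses import dataclass

# Assumption: labels borrowed from Nielsen's commonly cited severity ratings.
# The article itself only specifies a 0 to 4 numeric range.
SEVERITY_LABELS = {
    0: "not a problem",
    1: "cosmetic",
    2: "minor",
    3: "major",
    4: "catastrophic",
}


@dataclass
class Violation:
    """One row of the violation table: Issue, Severity, SaaSHero Fix."""
    issue: str
    severity: int
    fix: str

    def __post_init__(self) -> None:
        # Reject scores outside the 0 to 4 scale early.
        if self.severity not in SEVERITY_LABELS:
            raise ValueError(f"severity must be 0-4, got {self.severity}")


def render_table(rows: list[Violation]) -> str:
    """Render rows as pipe-separated text, most severe first."""
    lines = ["Issue | Severity | SaaSHero Fix"]
    for v in sorted(rows, key=lambda v: v.severity, reverse=True):
        label = SEVERITY_LABELS[v.severity]
        lines.append(f"{v.issue} | {v.severity} ({label}) | {v.fix}")
    return "\n".join(lines)


rows = [
    Violation(
        "AI agent processing lacks progress feedback",
        3,
        "Micro-animations plus estimated completion times",
    ),
]
print(render_table(rows))
```

Validating the score when the record is created keeps out-of-range ratings from slipping into later prioritization.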

Step 2: Align Dovetail Terminology with Real-World Workflows

Confirm that Dovetail uses language that matches SaaS insights workflows instead of niche jargon. The January 2026 release of Agents and Dashboards introduced concepts that may feel unfamiliar to non-technical users. Compare labels such as “boards” and “folders,” review AI dashboard names, and inspect collaboration permission language. Record issues such as unclear agent terminology or dashboard navigation that conflicts with user mental models. Apply SaaSHero’s Relevance principle so terminology matches user expectations, reduces cognitive load, and supports faster adoption.

Step 3: Strengthen User Control and Freedom in Dovetail

Confirm that users can easily undo actions in clip editing, AI tagging, and board organization. Expert reviews highlight rigid filtering options that limit flexibility and efficiency. Test whether users can reverse AI tag applications, restore deleted clips, and exit complex workflows without losing work. Treat missing undo in collaboration boards or irreversible AI agent actions as critical violations. Apply SaaSHero’s Friction reduction principle with clear escape routes and safety nets, similar to our Intercom case alignment. Review SaaSHero’s CRO audit examples for implementation ideas.

Step 4: Enforce Consistency and Standards Across Dovetail

Check for consistent design patterns across projects, playback interfaces, and dashboards. Look for mismatched icons between tagging and filtering, different button styles across project views, or conflicting navigation between AI agents and manual workflows. UX experts report dashboard inconsistencies that push users toward exports for stakeholder reporting. Capture cases where similar functions use different visuals or interactions. Apply SaaSHero’s Trust signals principle so consistent design builds confidence and shortens learning curves.

Step 5: Prevent Errors and Protect Research Data

Review safeguards against data loss during tagging imports, accidental deletions, and AI processing failures. Test bulk tag operations, folder reorganization, and integration syncing that might cause irreversible changes. Users report that AI accuracy still needs improvement, so strong error prevention protects data integrity. Flag missing confirmation dialogs, weak destructive action warnings, and limited backup options. Record any scenario where users can overwrite research data or lose collaborative work without protection.

Step 6: Favor Recognition Over Recall in Dovetail Interfaces

Confirm that session replay controls, tagging tools, and navigation rely on visual cues instead of memory. Experts praise Dovetail’s clean interface for replaying sessions without memorizing controls. Still, check whether clip navigation depends on remembered timestamps, whether AI agent functions have clear labels, and whether collaboration features show enough visual context. Record hidden features, ambiguous icons, and workflows that require users to remember previous steps. Apply SaaSHero’s Clarity principle so visual design communicates function directly.

Step 7: Support Flexibility and Efficiency for Power Users

Review shortcuts and customization options for teams handling large datasets or complex research programs. Reviews call out rigid filtering options that slow advanced users. Test whether AI agents can be tuned for specific research methods, whether bulk operations exist, and whether keyboard shortcuts support frequent actions. Capture gaps in dashboard customization, inflexible tagging flows, and missing batch tools that force repetitive work.

Step 8: Simplify Aesthetic and Minimalist Design in Dovetail

Scan dashboards, collaboration workspaces, and AI agent screens for visual clutter. Recent reviews mention complexity in the tagging system, which suggests possible design overload. Check whether permission settings add noise, whether dashboard widgets compete for attention, and whether AI agent controls distract from core tasks. Note decorative elements, redundant data, and competing visual hierarchies that slow task completion. Apply SaaSHero’s Relevance principle so every element supports a user goal.

Step 9: Improve Error Recognition and Recovery in Dovetail

Review error messages for integration failures, AI processing issues, and collaboration conflicts. Test Zendesk sync errors, failed video uploads, and permission clashes that trigger user-facing errors. SaaS audit examples show that jargon-heavy error messages break usability heuristics. Record whether messages explain the issue, list clear recovery steps, and offer alternatives. Treat vague technical errors, missing troubleshooting guidance, and dead-end error states as serious violations.

Step 10: Evaluate Help and Documentation for Dovetail Teams

Review onboarding and help content for non-UX team members who use Dovetail. Intercom’s case study notes early onboarding friction for non-UX specialists, later reduced by 2025 tutorials. Confirm that help covers AI agent setup, collaboration permissions, and integrations. Test help search and confirm coverage for common troubleshooting paths. Capture gaps in contextual help, missing tutorials for 2026 features, and limited guidance for complex workflows that block adoption.

Measurement and Validation of Dovetail Findings

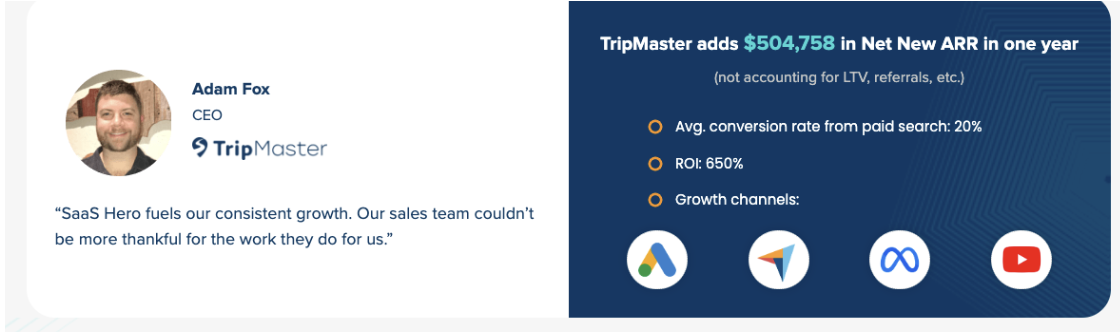

Plan to uncover at least 20 actionable findings, with about 80% classified as quick wins that need light development effort. In one marketing SaaS case, onboarding fixes based on heuristic recommendations raised completion rates from 55% to 78%. Use a 0 to 4 severity scale and a prioritization matrix so you address high-impact, low-effort items first. SaaSHero’s TripMaster case study shows 650% ROI from systematic UX work, with 20% conversion lifts common when teams execute fixes properly. Validate findings through agreement across evaluators and connect each recommendation to metrics such as activation, feature adoption, and support ticket volume.
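The prioritization matrix and the cross-evaluator check can be sketched in a few lines of Python. Everything here is illustrative: the `Finding` class, the 1 to 3 effort scale, the quick-win thresholds, and the sample findings are assumptions rather than SaaSHero’s actual scoring rules.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    issue: str
    severities: list[int]  # one 0-4 rating per evaluator
    effort: int            # assumed scale: 1 (light) to 3 (heavy)

    @property
    def mean_severity(self) -> float:
        return sum(self.severities) / len(self.severities)

    @property
    def agreement(self) -> float:
        """Share of evaluators who rated the issue above 0."""
        flagged = sum(1 for s in self.severities if s > 0)
        return flagged / len(self.severities)


def is_quick_win(f: Finding) -> bool:
    # Assumed thresholds: high average severity and light effort.
    return f.mean_severity >= 2.5 and f.effort == 1


def prioritize(findings: list[Finding]) -> list[Finding]:
    # Highest severity first; ties go to the lower-effort item.
    return sorted(findings, key=lambda f: (-f.mean_severity, f.effort))


# Hypothetical ratings from 3 evaluators.
findings = [
    Finding("Inconsistent button styles across project views", [1, 0, 2], 1),
    Finding("No undo for bulk tag changes", [4, 3, 3], 3),
    Finding("AI agent processing lacks progress feedback", [3, 3, 4], 1),
]

for f in prioritize(findings):
    tag = "quick win" if is_quick_win(f) else "backlog"
    print(f"{f.mean_severity:.1f} | effort {f.effort} | "
          f"agreement {f.agreement:.0%} | {tag} | {f.issue}")
```

Low agreement on a finding is a cue to discuss it as a group before it enters the roadmap.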

SaaSHero’s CRO Extensions and Dovetail Template

SaaSHero expands basic heuristic reviews with 7 conversion principles that include Relevance, Clarity, Trust, and Friction reduction. Research shows every dollar invested in UX can return $100, or 9,900% ROI, so professional evaluation has direct financial impact. Our template includes columns for Heuristic, Interface Element, Violation Description, Severity Score, Recommended Fix, and Implementation Priority. This structure has generated $504k ARR for clients through targeted UX improvements. Download our free Dovetail evaluation template to start your assessment now.
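The 9,900% figure follows from the standard ROI formula: net gain divided by cost. A short Python check, assuming ROI is defined as (return minus cost) divided by cost; `roi_percent` is a hypothetical helper name.

```python
def roi_percent(total_return: float, cost: float) -> float:
    """ROI as a percentage: net gain divided by cost, times 100."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (total_return - cost) / cost * 100


# $1 invested, $100 returned: net gain of $99 on a $1 cost.
print(roi_percent(100, 1))  # 9900.0
```

The same helper can be applied to your own audit costs and the revenue you attribute to the resulting fixes.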

Summary and SaaSHero Recommendation for Dovetail

Follow a 4-step checklist: prepare materials and team, audit each interface against all 10 heuristics, score and prioritize by business impact, then implement quick wins first. Expect top Dovetail issues around missing AI processing feedback, complex permission workflows, and weak error recovery. SaaS UX improvements can cut time-to-value by 40% and raise activation by 25%. Work with SaaSHero for expert execution through flat monthly retainers starting at $1,250, month-to-month terms, and senior-led delivery. Heuristic evaluation remains more cost-effective than research with recruited user samples while offering similar insight depth. Avoid vanity-metric agencies and choose SaaSHero as your revenue-first partner. Book a discovery call to turn your Dovetail experience into a conversion engine.

Frequently Asked Questions

How long does a comprehensive Dovetail heuristic evaluation take?

A thorough Dovetail heuristic evaluation usually takes 2 to 4 hours with a team of 3 evaluators. This window covers review of key interfaces such as projects, tagging, AI agents, collaboration boards, and playback. It also includes documentation of violations, severity scoring, and prioritization of fixes. Time varies by account complexity and feature depth, but this range supports insights that can drive 20 to 40% gains in adoption and workflow efficiency.

What are the 10 heuristics of UX and how do they apply to SaaS tools like Dovetail?

Nielsen’s 10 usability heuristics give you a structured way to review SaaS interfaces. They cover Visibility of System Status, Match Between System and Real World, User Control and Freedom, Consistency and Standards, Error Prevention, Recognition Rather Than Recall, Flexibility and Efficiency of Use, Aesthetic and Minimalist Design, Help Users Recognize and Recover from Errors, and Help and Documentation. In Dovetail, these principles address complex research workflows and collaboration needs, including AI processing feedback, intuitive terminology, undo options, consistent patterns, and strong help content.

Do you provide a heuristic evaluation template specifically for Dovetail?

SaaSHero offers heuristic evaluation templates tailored for SaaS tools like Dovetail. Columns include Heuristic Category, Interface Element, Violation Description, Severity Score from 0 to 4, Recommended Fix, Implementation Priority, and Business Impact. The template covers AI agents, tagging workflows, collaboration boards, and integrations. This structure supports consistent evaluations and outputs that product and engineering teams can act on immediately.
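If you want a working file before you download the template, the columns listed above can be turned into a blank CSV. This Python sketch is an approximation, not SaaSHero’s actual template: `build_template` is a hypothetical helper, and the priority and business-impact values in the sample row are illustrative.

```python
import csv
import io

# Column names taken from the list above.
COLUMNS = [
    "Heuristic Category",
    "Interface Element",
    "Violation Description",
    "Severity Score (0-4)",
    "Recommended Fix",
    "Implementation Priority",
    "Business Impact",
]


def build_template(rows: list[dict[str, str]]) -> str:
    """Return CSV text with the header row plus any starter rows."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


example = {
    "Heuristic Category": "Visibility of System Status",
    "Interface Element": "AI agents",
    "Violation Description": "AI agent processing lacks progress feedback",
    "Severity Score (0-4)": "3",
    "Recommended Fix": "Micro-animations plus estimated completion times",
    "Implementation Priority": "High",       # illustrative value
    "Business Impact": "Feature adoption",   # illustrative value
}

print(build_template([example]))
```

Giving every evaluator the same header row makes their sheets straightforward to merge when you compare scores.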

What is the difference between SaaSHero’s professional heuristic evaluation and DIY approaches?

SaaSHero’s professional heuristic evaluations have delivered about 650% ROI in client work such as the TripMaster case by combining B2B SaaS expertise, a repeatable method, and revenue-focused recommendations. Our senior team understands SaaS workflows, conversion levers, and measurement, which DIY efforts often miss. We connect heuristic findings with CRO principles, competitor analysis, and implementation roadmaps that turn insights into growth. DIY reviews usually surface obvious issues but lack strategic context and execution depth.

How do the 2026 Dovetail updates with AI agents and dashboards affect heuristic evaluation?

The January 2026 launch of AI Agents and Dashboards adds new areas to evaluate, such as automation feedback, AI accuracy messaging, and dashboard usability. These features introduce new interaction patterns around autonomous assistants, custom visualizations, and cross-platform integrations. Our method reviews AI transparency, automation control, dashboard clarity, and integration reliability so these features support rather than complicate workflows. The added complexity increases the value of professional evaluation.

What are the risks of conducting heuristic evaluation without professional expertise?

Heuristic evaluations without expert support risk missing high-impact issues, misjudging severity, and shipping fixes that do not support conversion goals. Inexperienced reviewers often focus on visual polish and ignore workflow problems that drive churn. Without a solid method and bias controls, DIY reviews can confirm existing beliefs instead of uncovering real barriers. Modern SaaS tools like Dovetail require knowledge of research workflows, collaboration patterns, and technical limits that generalist teams rarely hold.

How frequently should Dovetail heuristic evaluations be conducted?

Run Dovetail heuristic evaluations every quarter to match platform updates, new features, and changing user needs. The pace of releases, including AI agents and dashboards, makes regular reviews essential for a strong user experience. Add evaluations after major onboarding waves, workflow changes, or new integrations to keep performance high. This cadence supports proactive improvements and sustained gains in adoption and productivity. Book a discovery call to plan your ongoing evaluation schedule.