Key Takeaways

- Forty percent of SaaS churn comes from poor UX. Remote heuristic analysis using a 7-step framework addresses B2B complexities like multi-user workflows and permissions.

- Run evaluations with three independent reviewers: a PM, a UX designer, and a developer. Focus on high-frequency tasks such as onboarding and dashboards, using tools like Loom and Miro.

- Apply SaaSHero’s seven principles, including relevance, clarity, trust, and friction, with severity scoring to rank fixes by revenue impact.

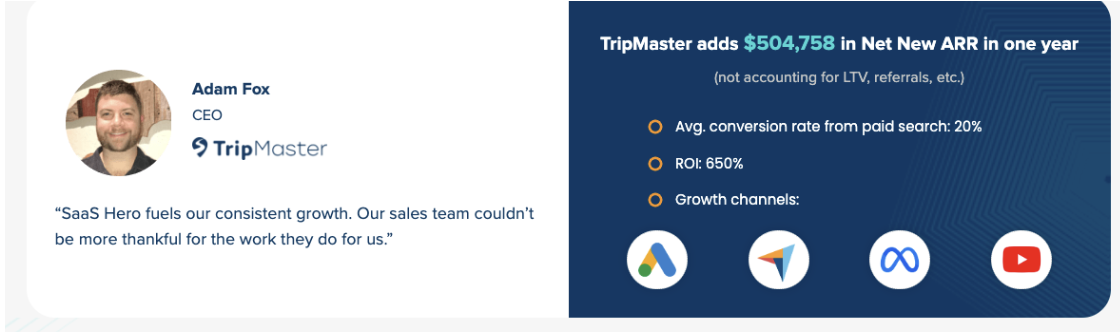

- Validate findings with GA4 analytics and support data. Clients often see 20% conversion lifts and more than $504K in Net New ARR through systematic implementation.

- Teams ready to improve B2B SaaS UX can book a discovery call with SaaSHero for expert-led audits and faster growth.

Tools and Context You Need Before a Remote UX Review

Successful remote heuristic evaluation starts with the right tools and shared expectations. Teams rely on Loom for screen recordings, Miro or Notion for collaborative documentation, Figma for mockups, and Google Analytics 4 or HubSpot for baseline metrics. Allocate 4-8 hours for a full evaluation, and make sure everyone understands that heuristic analysis delivers qualitative insights that work best alongside quantitative A/B testing.

B2B SaaS products introduce challenges that differ from consumer apps. Multi-user workflows, complex permission structures, and progressive disclosure in dashboards require tailored evaluation methods. This methodology builds on Nielsen’s foundational usability heuristics and extends them to SaaS-specific journeys from first signup through advanced feature adoption.

SaaSHero’s 7-Principle Framework for B2B SaaS UX

SaaSHero uses a framework that adapts traditional heuristics to B2B SaaS through seven principles, four of which (relevance, clarity, trust, and friction) are shown below. Each principle targets a specific SaaS UX challenge and includes clear criteria for severity assessment.

| Principle | SaaS Example | Severity Check | Action Priority |
|---|---|---|---|
| Relevance | Ad-to-dashboard match | High if churn | Immediate fix |
| Clarity | Five-second value prop in onboarding | Medium if confusion | Quick win |
| Trust | Role-based permissions visible | High if support spikes | Immediate fix |
| Friction | Minimum clicks in workflows | High if drop-off | Quick win |

The evaluation process follows a clear flow. Teams define scope, run independent evaluations, score severity, then prioritize fixes together. This structure keeps coverage broad while attention stays on issues that affect revenue.

7 Steps to Run a Remote Heuristic Evaluation for B2B SaaS

Step 1: Define Scope and High-Frequency Tasks

Start by focusing on user journeys that affect conversion and retention. Prioritize dashboard navigation, onboarding flows, core feature workflows, and permission management interfaces. Document the specific tasks users perform most often, such as creating reports, managing team access, or configuring integrations.

Step 2: Assemble a Remote Evaluation Team

Form a group of three independent evaluators with distinct viewpoints. Include a product manager who understands user needs, a UX designer who knows interface principles, and a developer who understands technical constraints. Remote work requires clear communication rules and shared documentation standards so findings stay consistent.
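
One way to keep remote findings consistent is to agree on a shared record format before anyone starts reviewing. The sketch below is only an illustration: the `Finding` class and its field names are hypothetical, and the principle list covers just the four principles named in this article.

```python
from dataclasses import dataclass, asdict

# Only the four principles named in this article; the remaining principles
# of the seven are not listed here, so they are not included.
NAMED_PRINCIPLES = {"relevance", "clarity", "trust", "friction"}


@dataclass(frozen=True)
class Finding:
    """Hypothetical shared record that every evaluator fills in the same way."""
    evaluator: str           # e.g. "pm", "designer", "developer"
    task: str                # high-frequency task from Step 1
    principle: str           # principle judged to be violated
    description: str
    recording_url: str = ""  # optional link to the screen recording

    def __post_init__(self):
        # Reject principle names the team has not agreed on.
        if self.principle.lower() not in NAMED_PRINCIPLES:
            raise ValueError(f"unknown principle: {self.principle}")


example = Finding(
    evaluator="designer",
    task="managing team access",
    principle="Trust",
    description="Role change shows no confirmation",
)
print(asdict(example))
```

Rejecting unknown principle names at creation time keeps later clustering and scoring from splitting the same issue across differently spelled labels.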

Step 3: Run Independent UX Reviews

Each evaluator completes an individual assessment and records their screen with Loom to capture thought processes. Attention stays on B2B SaaS elements such as permission hierarchies, multi-tenant data visibility, and workflow handoffs between roles. Evaluators work separately during this step to avoid groupthink and preserve independent insights.

Step 4: Use a Severity Scoring Matrix

Severity rating scales categorize findings based on impact and frequency so teams can prioritize fixes effectively.

| Severity | Impact/Frequency | Action Required | Timeline |
|---|---|---|---|
| 4-Catastrophic | High/High | Fix immediately | This sprint |
| 3-Major | High/Medium | Quick win priority | Next sprint |
| 2-Minor | Low/Medium | Roadmap item | Next quarter |
| 1-Cosmetic | Low/Low | Future consideration | Backlog |
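
The matrix can be treated as a simple lookup. The sketch below encodes only the four rows in the table; the function name `score_severity` is hypothetical, and combinations the table does not cover (for example High impact with Low frequency) return `None` so the team decides them by discussion instead of by guesswork in code.

```python
from typing import Optional, Tuple

# Rows copied from the severity table: (impact, frequency) -> (score, label).
SEVERITY_MATRIX = {
    ("high", "high"): (4, "Catastrophic"),
    ("high", "medium"): (3, "Major"),
    ("low", "medium"): (2, "Minor"),
    ("low", "low"): (1, "Cosmetic"),
}


def score_severity(impact: str, frequency: str) -> Optional[Tuple[int, str]]:
    """Return (score, label), or None when the table has no matching row."""
    key = (impact.strip().lower(), frequency.strip().lower())
    return SEVERITY_MATRIX.get(key)


print(score_severity("High", "Medium"))  # a row that exists in the table
print(score_severity("High", "Low"))     # not covered by the table
```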

Step 5: Combine Findings in a Shared Workspace

Use Miro or a similar tool to bring individual evaluations together in one place. Cluster findings into themes like navigation, terminology, feedback, permissions, and forms to reveal broader UX patterns. Include mobile experience in the review, because B2B users increasingly access SaaS tools from phones and tablets.
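
If evaluators export their notes as structured rows, the clustering step can be sketched in a few lines. The sample findings, field names, and the `cluster_by_theme` helper are all hypothetical; the themes come from the list above.

```python
from collections import defaultdict

# Hypothetical findings exported from each evaluator's notes.
findings = [
    {"evaluator": "pm", "theme": "permissions", "issue": "Role change gives no confirmation"},
    {"evaluator": "designer", "theme": "permissions", "issue": "Role change gives no confirmation"},
    {"evaluator": "developer", "theme": "navigation", "issue": "Reports hidden under Settings"},
    {"evaluator": "designer", "theme": "forms", "issue": "Integration form loses input on error"},
]


def cluster_by_theme(items):
    """Group issues by theme and record which evaluators reported each one."""
    clusters = defaultdict(lambda: defaultdict(set))
    for item in items:
        clusters[item["theme"]][item["issue"]].add(item["evaluator"])
    return clusters


clusters = cluster_by_theme(findings)
for theme in sorted(clusters):
    for issue, evaluators in clusters[theme].items():
        print(f"{theme}: {issue} (reported by {len(evaluators)} evaluator(s))")
```

Tracking which evaluators reported each issue makes it easy to see which findings were corroborated independently and which rest on a single opinion.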

Step 6: Rank Issues by ROI Potential

Order issues by likely business impact, starting with quick wins. Consider development effort, user frequency, and revenue correlation for each issue. Address high-severity, low-effort problems first so the team builds momentum and confidence.
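
A minimal sketch of this ordering, assuming each issue already has a severity score from Step 4 plus rough estimates of effort and reach. The `Issue` fields, the sample backlog, and the tie-breaking order are illustrative assumptions; revenue correlation is left out because it depends on data specific to each product.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    title: str
    severity: int        # 1-4, from the severity matrix
    effort_days: float   # hypothetical engineering estimate
    weekly_users: int    # hypothetical count of users who hit the flow


def rank_issues(issues):
    """Sort by severity (high first), then effort (low first), then reach (high first)."""
    return sorted(issues, key=lambda i: (-i.severity, i.effort_days, -i.weekly_users))


backlog = [
    Issue("Dashboard overload", 3, 5.0, 900),
    Issue("Permission error copy", 4, 1.0, 300),
    Issue("Misaligned icon", 1, 0.5, 900),
    Issue("Onboarding dead end", 4, 3.0, 650),
]

ranked = rank_issues(backlog)
for position, issue in enumerate(ranked, start=1):
    print(position, issue.title)
```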

Step 7: Validate Findings with Analytics

Compare heuristic findings with Google Analytics 4 data, support ticket volumes, and user feedback. Look for clear links between identified issues and measurable behavior patterns such as drop-offs, error events, or repeated support themes.
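
As an illustration of this cross-check, the sketch below computes drop-off between consecutive funnel steps and marks the steps where evaluators logged findings. The step names and user counts are made up, and `drop_off_rates` is a hypothetical helper; in practice the counts would come from an analytics funnel export.

```python
def drop_off_rates(funnel):
    """Return the share of users lost between each pair of consecutive steps."""
    rates = {}
    for (step, users), (next_step, next_users) in zip(funnel, funnel[1:]):
        rates[f"{step} -> {next_step}"] = (users - next_users) / users if users else 0.0
    return rates


# Hypothetical step counts for an onboarding funnel.
funnel = [
    ("signup", 1000),
    ("invite_team", 600),
    ("connect_integration", 450),
    ("first_report", 405),
]
# Hypothetical: steps where evaluators logged high-severity findings.
flagged_steps = {"invite_team"}

rates = drop_off_rates(funnel)
for transition, rate in rates.items():
    target = transition.split(" -> ")[1]
    marker = "heuristic finding" if target in flagged_steps else "no finding"
    print(f"{transition}: {rate:.0%} drop-off ({marker})")
```

A large drop-off at a step with a logged finding supports the heuristic result, while a large drop-off with no finding suggests the evaluators may have missed something.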

Turn these insights into revenue growth. Book a discovery call to see how SaaSHero’s CRO expertise can speed up your UX improvements.

Real-World Example: Remote Heuristic Analysis for a B2B Dashboard

SaaSHero’s work with clients such as TripMaster and innQuest shows how structured heuristic evaluation and CRO drive revenue. The TripMaster case study produced $504,758 in Net New ARR and 650% ROI through paid search, paid social, and focused CRO that resolved clarity and friction issues.

| Issue Identified | Heuristic Violated | Solution Implemented | Measured Impact |
|---|---|---|---|
| Dashboard information overload | Clarity | Progressive disclosure design | 20% conversion lift |
| Permission error messages | Trust | Role-based UI visibility | 30% reduction in support tickets |
| Workflow navigation confusion | Friction | Streamlined task flows | 15% faster task completion |

Removing usability barriers that blocked adoption and increased churn contributed to the TripMaster results above. SaaSHero’s innQuest CRO audit further illustrates how the heuristic analysis process works in practice.

Measuring UX Gains and Using the B2B SaaS Heuristics Template

Effective measurement focuses on metrics that connect directly to heuristic improvements. Track conversion funnel drop-off rates, support ticket volume by category, user task completion times, and feature adoption rates through Google Analytics 4 events and CRM data.

Key validation metrics include:

- Dashboard engagement time and bounce rates

- Onboarding completion percentages

- Feature discovery and adoption rates

- Support ticket categorization and volume trends

- User satisfaction scores from in-app feedback
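
Most of these metrics are reported as a relative change against a baseline measured before the fixes shipped. The sketch below shows that arithmetic; the `relative_change` helper and all numbers are hypothetical placeholders, not client data.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from a baseline; positive means the metric went up."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


# Hypothetical before/after values for two validation metrics.
conversion_lift = relative_change(before=0.050, after=0.060)
ticket_change = relative_change(before=200, after=140)

print(f"Conversion rate change: {conversion_lift:+.0%}")
print(f"Support ticket change: {ticket_change:+.0%}")
```

Measuring the baseline over the same length of time as the post-fix period helps avoid mistaking seasonal swings for UX gains.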

The B2B SaaS heuristics template combines the seven-principle model with severity scoring matrices, evaluation forms, and prioritization worksheets. It includes B2B SaaS-specific criteria for permissions, multi-tenant interfaces, and complex workflow reviews.

Teams can access the full evaluation framework and speed up their UX work. Book a discovery call to receive guidance on rollout and adoption.

Advanced UX Variations and When to Partner with SaaSHero

Advanced teams extend remote heuristic analysis with competitive benchmarking, A/B testing validation, and AI-assisted evaluation tools that reach 95% accuracy compared to human experts. Automated tools still need human oversight for B2B SaaS context, domain rules, and business logic.

SaaSHero provides a comprehensive approach to B2B SaaS growth by combining heuristic analysis, conversion rate optimization, and ongoing performance monitoring. Their month-to-month engagement model starting at $1,250 gives teams senior-led expertise without long contracts and has delivered outcomes such as TestGorilla’s 80-day payback period.

Teams ready to scale beyond DIY evaluation can book a discovery call and explore how SaaSHero’s methodology can drive measurable ARR growth for their B2B SaaS product.

Summary of the Framework and Your Next Moves

Remote heuristic analysis gives B2B SaaS teams a repeatable way to find and rank UX improvements that affect revenue. The seven-step process supports full evaluations in 4-8 hours and keeps attention on SaaS-specific challenges such as permissions, workflows, and progressive disclosure.

Success depends on the right tools, independent evaluation, severity-based prioritization, and analytics validation. Teams often see conversion lifts around 20% when they implement findings consistently, with the largest gains coming from clarity and friction fixes in core workflows.

Next steps include running an initial evaluation with this framework, shipping quick wins first, and setting quarterly evaluation cycles to maintain progress. Teams that want expert support or faster outcomes can partner with a specialized agency such as SaaSHero for senior-level guidance and proven playbooks.

Frequently Asked Questions

How long does it take to see results from remote heuristic analysis?

Most B2B SaaS teams complete the first evaluation in 4-8 hours and ship quick wins within one or two sprints. Measurable improvements usually appear within 30-60 days, and conversion lifts of 15-20% are common when teams fix high-severity clarity and friction issues. The strongest gains come from improvements in high-frequency user workflows.

What team roles should be involved in the evaluation process?

Ideal teams include three independent evaluators. A product manager covers user needs and business goals, a UX designer focuses on interface principles and user psychology, and a developer evaluates technical feasibility and complexity. Together they cover usability, business impact, and engineering constraints.

How should small SaaS teams adapt this methodology?

Small teams can narrow scope to core flows that affect conversion and retention, such as onboarding, primary dashboard workflows, and key feature adoption paths. Limit the review to two or three critical journeys instead of the full product. Involve customer success teammates who speak with users often and understand recurring pain points.

What are the main risks of remote heuristic evaluation?

The main risk is evaluator bias, where personal views hide important issues or overemphasize preferences. Reduce this risk by using at least three independent evaluators, applying objective criteria, and validating findings with analytics and support data. Avoid product changes based on feedback from a single evaluator.

How frequently should B2B SaaS teams conduct heuristic evaluations?

Quarterly evaluations work well for most growing B2B SaaS products and help teams review new features, track usability debt, and sustain optimization. Teams that launch major features or see spikes in usability-related support tickets should run focused evaluations more often. Treat heuristic evaluation as a regular part of product development instead of a one-time project.