Key Takeaways for B2B SaaS Teams

- Heuristic analysis often introduces subjectivity, bias, and false positives or negatives that push teams toward low-impact fixes and wasted development time.

- Traditional reviews rarely connect with real user behavior or revenue metrics like CAC, LTV, and MRR, so critical conversion problems stay hidden.

- Most heuristic audits stop at surface-level UI issues and ignore deeper workflow and architecture problems inside complex SaaS products.

- Heuristic methods struggle to scale for AI-driven, data-heavy interfaces and depend heavily on expert evaluators that many smaller teams do not have.

- Pair heuristics with user testing, A/B experiments, and expert frameworks. Book a discovery call with SaaSHero for a revenue-focused CRO audit that consistently drives ARR growth.

7 Practical Limits of Heuristic Analysis in B2B SaaS UX

The limits below reflect the most common problems B2B SaaS teams face when they rely too heavily on heuristic evaluations.

1. Subjectivity and Evaluator Bias in Complex SaaS Flows

Heuristic evaluations remain highly subjective because different evaluators flag different issues based on their own experience and skill level. In B2B SaaS, this becomes painful when teams review dense dashboards or multi-step onboarding flows. A senior UX designer may call the screen overloaded, while a product manager who works with power users may see essential functionality. These disagreements create conflicting recommendations and push development teams toward subjective “improvements” that do not move behavior or revenue metrics.

2. No Direct Connection to Real User Behavior

Traditional heuristic analysis usually runs separately from product analytics and user research, so it cannot show how real customers move through the product. Heuristics act as broad guidelines and, without live user data, often miss context-specific usability problems. In B2B SaaS, this gap hides “dark funnel” issues inside trial-to-paid flows, feature adoption paths, and upgrade journeys that quietly increase churn and reduce expansion revenue.

3. False Positives, False Negatives, and Misplaced Effort

Heuristic evaluation frequently produces false positives and misses subtle but important issues. In B2B SaaS, false positives can push teams to “fix” elements that actually support conversion, such as detailed forms that qualify leads or dense dashboards that advanced users rely on. False negatives allow high-impact problems to remain, including unclear pricing, confusing upgrade paths, and friction in billing flows that directly limit ARR growth.

4. Shallow Focus on Interface Instead of System Design

Most heuristic reviews focus on UI-level problems and ignore deeper workflow or architectural issues that shape the real customer experience. This narrow focus misses structural problems like broken information architecture for multi-stakeholder buying committees, weak role and permission models, or clumsy integration workflows that frustrate customer success teams. These deeper gaps often influence LTV, expansion revenue, and long-term retention more than any single UI tweak.

5. Heavy Dependence on Scarce Expert Evaluators

Inconsistent findings are especially risky for early-stage products, where teams often lack seasoned usability experts and adding more reviewers does not close the gap. Many B2B SaaS startups and scale-ups cannot access senior UX specialists who understand complex business software. When junior designers or generalists run heuristic audits, they often miss industry norms, misjudge advanced workflows, or simplify features that power users actually need.

6. Difficulty Scaling to AI-Driven and Dynamic Interfaces

Modern B2B SaaS products now include AI copilots, adaptive interfaces, and dense data visualizations that change in real time. Traditional heuristics struggle to keep pace with these patterns, and handing the review to AI does not solve the problem. When ChatGPT and Gemini reviewed the same interface, they surfaced very different problems: only 20% of the issues were flagged by both tools, 38% were unique to ChatGPT, and 42% were unique to Gemini. This variability shows that even AI-assisted heuristic analysis still lacks consistency for modern, complex SaaS experiences.
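The overlap percentages quoted above are simple set arithmetic over the pooled findings of both reviewers. Here is a minimal Python sketch, assuming each reviewer's findings have already been deduplicated into issue IDs. The `overlap_report` helper and the issue IDs are hypothetical, and the counts were chosen only so that the shares match the figures quoted above.

```python
def overlap_report(findings_a: set[str], findings_b: set[str]) -> dict[str, float]:
    """Return the share of pooled issues that are shared or unique to each reviewer."""
    pooled = findings_a | findings_b
    if not pooled:
        raise ValueError("at least one reviewer must report an issue")
    total = len(pooled)
    return {
        "shared": len(findings_a & findings_b) / total,
        "only_a": len(findings_a - findings_b) / total,
        "only_b": len(findings_b - findings_a) / total,
    }


# Hypothetical issue IDs: 10 flagged by both, 19 only by reviewer A, 21 only by reviewer B.
shared_ids = {f"S{i}" for i in range(10)}
reviewer_a = shared_ids | {f"A{i}" for i in range(19)}
reviewer_b = shared_ids | {f"B{i}" for i in range(21)}

report = overlap_report(reviewer_a, reviewer_b)
for label, share in report.items():
    print(f"{label}: {share:.0%}")
```

Tracking the shared share across audits gives a rough consistency signal: the lower it is, the more each individual finding needs validation before it reaches the roadmap.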

7. No Built-In Link to Revenue Metrics

For B2B SaaS leaders, the biggest gap is the missing link between heuristic findings and revenue metrics like CAC, LTV, and MRR. AI-based heuristic UX evaluations reach only 50–75% accuracy, which falls short of the 95% reliability needed when revenue is on the line. Without quantitative validation, teams cannot rank fixes by revenue impact or prove that changes improved trial-to-paid conversion, expansion, or retention.

| Issue Type | Heuristic Fix Time/Cost | Actual Conversion Impact | SaaSHero Fix |
|---|---|---|---|
| Form “Too Long” | 2 weeks, $8k dev | -15% lead quality | A/B test progressive disclosure |
| Dashboard “Cluttered” | 4 weeks, $15k dev | -8% user engagement | User testing reveals power user needs |
| Pricing “Unclear” | 1 week, $3k design | +22% trial-to-paid | Revenue-focused messaging optimization |

These issues compound when teams rely only on heuristic analysis to guide product decisions. Book a discovery call to see how SaaSHero’s multi-evaluator framework uncovers conversion opportunities that standard audits miss.

When Heuristics Work: Smart Mitigations for SaaS Teams

Heuristic analysis still delivers value when teams apply it with guardrails and pair it with stronger research methods. The key lies in using heuristics for fast diagnosis, then validating insights with real users and real numbers.

Multi-Evaluator Reviews for Balanced Insights

Adding more reviewers improves issue detection up to roughly five evaluators. The most efficient setup uses three or four evaluators with different backgrounds. This mix reduces individual bias by blending perspectives while keeping costs manageable. For B2B SaaS, include at least one evaluator with business software domain expertise, one with technical understanding, and one who represents end-user workflows.
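One common way to reason about this diminishing return is the model associated with Nielsen and Landauer, in which the share of problems found by n evaluators is 1 - (1 - λ)^n. The sketch below assumes λ = 0.31, a frequently quoted average per-evaluator detection rate. That rate and the `share_found` helper are assumptions for illustration, not figures from this article, and real detection rates vary with product complexity and evaluator skill.

```python
def share_found(evaluators: int, detection_rate: float = 0.31) -> float:
    """Estimated share of usability problems found by a group of evaluators.

    Assumes every evaluator independently finds each problem with the same
    probability (detection_rate), which is a simplification.
    """
    if evaluators < 0:
        raise ValueError("evaluators must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    return 1.0 - (1.0 - detection_rate) ** evaluators


# Show the marginal gain from each additional evaluator.
for n in range(1, 7):
    gain = share_found(n) - share_found(n - 1)
    print(f"{n} evaluators: {share_found(n):.0%} found (+{gain:.0%})")
```

Under the assumed rate, three evaluators find about two thirds of the problems and five find roughly 84%, which is consistent with the guidance that returns flatten after about five reviewers.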

Hybrid Testing That Connects UX to Revenue

Teams get the strongest results when they combine heuristic analysis with user testing and quantitative data. AI-assisted heuristic reviews reach only 50–75% accuracy, so human validation remains essential. Use heuristics for a quick first pass, then confirm or reject findings through user interviews, usability tests, A/B experiments, and behavioral analytics before shipping changes.
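To make the "confirm or reject" step concrete, a heuristic finding can be treated as a hypothesis and checked with an A/B experiment. The sketch below uses a standard two-proportion z-test built on Python's `math` module. The `two_proportion_z_test` helper and the conversion counts are hypothetical, and a real program would also plan sample size and test duration in advance.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("both groups need at least one visitor")
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical trial-to-paid counts: control (A) vs. the variant suggested by the audit (B).
z, p_value = two_proportion_z_test(conv_a=120, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value suggests the change affected conversion. A large one means the heuristic finding has not been confirmed yet, whatever the audit said.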

Strategic Timing for Heuristic Audits

Heuristic analysis works best during early ideation, pre-launch reviews, and competitive audits. It should not serve as the final gate for revenue-critical changes. Treat heuristic findings as hypothesis starters that highlight obvious usability problems and potential friction points. Then move those hypotheses into structured testing and measurement before committing development resources.

| Method | Best Use Case | SaaS Stage | Time-to-Insight |
|---|---|---|---|
| Heuristic Analysis | Initial audit, competitive review | Pre-launch, major redesigns | 1–2 weeks |
| User Testing | Validation, behavior insights | Growth stage optimization | 3–4 weeks |
| A/B Testing | Revenue impact measurement | Scale-up, mature products | 4–8 weeks |
| Hybrid Approach | Comprehensive optimization | All stages | 2–6 weeks |

Book a discovery call to see how SaaSHero blends these methods into a revenue-focused optimization program with clear, measurable outcomes.

SaaSHero’s Revenue-First Heuristic CRO Framework

SaaSHero uses a structured, expert-led heuristic process to find “conversion killers” without waiting weeks for traffic data. Three independent evaluators review your product against seven usability principles, including relevance, clarity, trust, and friction, then deliver a prioritized roadmap of quick wins and high-impact tests.

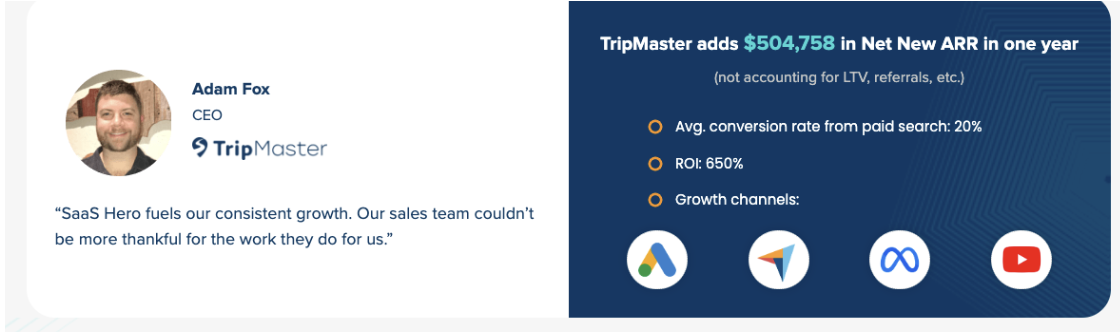

This framework underpins results such as 650% ROI and $504,758 in net new ARR for clients like TripMaster. Book a discovery call to see how a heuristic CRO audit can uncover revenue opportunities inside your own product.

Frequently Asked Questions About Heuristic Analysis in SaaS

What are the main limitations of heuristic evaluation?

Key limitations include evaluator subjectivity and bias, lack of real user data, false positives and negatives, shallow analysis scope, dependence on evaluator expertise, difficulty with modern interfaces, and no built-in link to revenue metrics. In B2B SaaS, these gaps often waste development resources and hide meaningful revenue opportunities.

How does heuristic analysis compare to user testing?

Heuristic analysis delivers faster, lower-cost initial insights but does not capture real behavior the way user testing does. Heuristics highlight obvious usability violations, while user testing reveals how people actually feel, think, and act as they complete tasks. The strongest approach uses heuristics to spot issues quickly, then relies on user testing to validate and prioritize fixes that affect conversion and satisfaction.

How many evaluators should run a heuristic audit?

Usability research suggests that three to five evaluators usually provide the best balance between coverage and cost. Using fewer than three increases the chance of missing important issues, while adding more than five often raises cost without meaningful gains. The most important factor is diversity of expertise and relevant domain knowledge for your product.

What are Nielsen heuristic limitations for SaaS products?

Nielsen’s original ten heuristics were written for general-purpose user interfaces and do not fully cover B2B SaaS realities. They overlook multi-stakeholder buying processes, complex data workflows, role-based permissions, integration-heavy environments, and subscription business model dynamics. Modern SaaS teams benefit from specialized frameworks that reflect these unique patterns.

Can AI remove heuristic evaluation bias in 2026?

AI-assisted heuristic tools help, but they still show major gaps. Different AI systems often highlight different issues when they review the same interface. AI can broaden perspective and speed up reviews, yet human experts remain essential for context, prioritization, and revenue-focused decision making.

Conclusion and Next Steps for B2B SaaS Teams

B2B SaaS teams that understand the limits of heuristic analysis make smarter product and growth decisions. Heuristic reviews provide a fast starting point, but subjectivity, missing user data, and weak revenue ties mean they must sit inside a broader research and testing strategy.

SaaSHero’s heuristic analysis combines expert evaluation with a revenue-first lens, supporting outcomes like 650% ROI and $504k in net new ARR for clients such as TripMaster. Book a discovery call today to turn usability findings into measurable ARR growth for your product.