Key Takeaways

- B2B SaaS A/B tests rely on chi-squared for conversions and t-tests for revenue because of low traffic and long sales cycles.

- Sequential testing reaches significance with about 500 visitors per variation, avoiding traditional requirements of 1,000 to 1,500 visitors.

- The 7-step framework covers hypothesis definition, sample size, execution, analysis, pitfall control, tools QA, and business impact.

- Bayesian and multi-armed bandit methods speed up results for low-traffic sites and shorten test duration significantly.

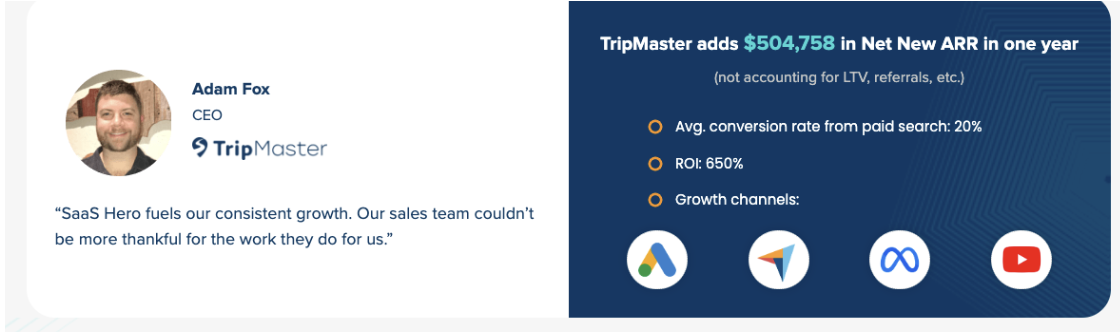

- TripMaster gained $504k ARR through disciplined testing, and you can schedule a discovery call with SaaSHero to apply the same approach.

Core Setup for B2B SaaS A/B Testing

Set up your tools before you touch any calculations. Use Google Sheets or Excel for statistical formulas, a testing platform like Statsig for experiment management, and CRM integration with HubSpot or Salesforce to track revenue beyond simple conversion rates.

B2B SaaS testing differs from e-commerce because sales cycles are longer, stakeholders are multiple, and attribution is complex. Sequential testing methods need at least 500 visitors per variation for reliable results, while traditional methods often need 1,000 to 1,500. The standard confidence level stays at 95% (p<0.05). B2B tests must also respect weekly business cycles and avoid “peeking,” which inflates Type I errors when teams check results too early.

7-Step Framework for B2B SaaS Test Significance

This 7-step process addresses the main challenges of B2B SaaS testing. The steps are: 1) Hypothesis and metrics definition, 2) Sample size calculation, 3) Test execution, 4) Chi-squared analysis for conversion rates, 5) T-test analysis for revenue metrics, 6) Pitfall identification and adjustment, and 7) Result interpretation and scaling.

Experiments that affect SQLs and pipeline usually need 4 to 8 weeks to reach significance. This longer window reflects the B2B buyer journey and helps you capture real behavior shifts instead of random noise.

|

Test Type |

Primary Metric |

Statistical Method |

Min Sample Size (Low Traffic) |

|

Landing Page Conversion |

Conversion Rate |

Chi-Squared Test |

500 per variation (Sequential) |

|

Demo Request Flow |

ARPU/MRR Impact |

T-Test |

200-500 per variation (Bayesian Alternative) |

|

Pricing Page |

Trial-to-Paid Rate |

Chi-Squared Test |

500 per variation (Sequential) |

Step 1: Define Revenue-Focused Hypotheses

Start with a clear hypothesis that connects directly to revenue. Replace vague goals like “improve engagement” with specific outcomes such as “Changing the CTA from ‘Learn More’ to ‘Get Demo’ will increase demo request rate by 20% and add $X in monthly ARR.” This direct revenue link secures stakeholder support and clarifies business impact.

Choose one primary metric such as conversion rate or trial signup rate, then add secondary metrics like ARPU, time-to-close, and customer quality. Shop Boss, for example, achieved a 305% conversion lift by focusing on demo request optimization instead of vanity metrics like page views or time on site.

Step 2: Calculate Sample Size and Power

Use this formula for required sample size: n = (Zα/2 + Zβ)² × (p1(1 – p1) + p2(1 – p2)) / (p2 – p1)².

Here Zα/2 equals 1.96 for 95% confidence, Zβ equals 0.84 for 80% power, p1 is the baseline conversion rate, and p2 is the expected improved rate. Low-traffic sites need larger relative uplifts, often a minimum detectable effect of 20 to 30 percent, to reach significance in a reasonable time.

B2B SaaS teams with limited traffic should use calculators that support sequential testing and Bayesian options. These tools show whether your traffic can sustain classic tests or if you should switch to alternative methods.

Step 3: Run Chi-Squared Tests for Conversion Rates

Use the chi-squared test to compare conversion rates between variations. The formula is χ² = Σ(Observed – Expected)² / Expected.

Build a 2×2 table with your data:

|

Converted |

Did Not Convert |

Total |

|

|

Control |

24 |

176 |

200 |

|

Variation |

30 |

170 |

200 |

|

Total |

54 |

346 |

400 |

Calculate expected values for each cell and then apply the chi-squared formula. If your χ² value is greater than 3.84 at 95% confidence, the result is statistically significant. Playvox used this type of testing on ad variations and achieved a 10x reduction in cost per lead.

Step 4: Use T-Tests for ARPU and MRR

Use a two-sample t-test when you test revenue impact. The formula is t = (x̄1 – x̄2) / √(s²(1/n1 + 1/n2)).

In this formula x̄1 and x̄2 are mean ARPU values for each group, s² is the pooled variance, and n1 and n2 are sample sizes. This method confirms whether conversion gains actually increase revenue. A 15% jump in demo requests has little value if those leads close at lower rates or bring in weaker ARPU.

TripMaster applied this approach and confirmed that their conversion lift produced $504,758 in Net New ARR instead of just more unqualified leads.

Step 5: Control B2B-Specific Testing Pitfalls

B2B SaaS tests face risks that can quietly break your results. Peeking at results before you reach significance raises Type I error rates. Use A/B tools with sequential testing guardrails to reduce premature calls.

Respect weekly business cycles by running tests for full weeks. B2B behavior often shifts between weekdays and weekends, so partial weeks can distort outcomes. Segment results by traffic source and customer type, because organic visitors usually behave differently from paid traffic in B2B funnels.

If attribution and setup feel overwhelming, book a discovery call with SaaSHero to build testing infrastructure that supports long sales cycles and multi-touch attribution.

Step 6: Validate Data with Tools and QA

Track events correctly in Google Analytics 4, your CRM, and your A/B testing platform. Confirm that your manual calculations align with platform p-values, and verify that each test meets sample size requirements before you declare a winner.

Combine a platform like Statsig for experiment management with independent significance calculators. This double-check approach protects you from platform bugs and misconfigured experiments.

Step 7: Translate Results into Business Impact

Statistical significance alone does not guarantee business value. A p-value below 0.05 shows that results are unlikely due to chance, but you still need to measure practical impact. Use customer lifetime value and funnel metrics to convert improvements into dollar terms.

For instance, a 10% lift in demo requests with p<0.01 might barely move revenue if your demo-to-close rate is 2% and ARPU is $100 per month. Prioritize tests that combine strong significance with meaningful financial outcomes, like Playvox’s 10x cost-per-lead reduction or Shop Boss’s 305% conversion increase.

Revenue-Focused Measurement and Validation

Success metrics should cover both statistics and revenue impact. Companies that run statistically sound A/B tests grow revenue 1.5 to 2 times faster and often see conversion lifts up to 49%.

Track results through your CRM so you measure immediate conversions and downstream effects like customer quality, retention, and actual revenue. TestGorilla reached an 80-day payback period by tying ad spend to closed revenue through disciplined testing, which supported their $70M Series A raise.

Advanced Methods for Low-Traffic B2B SaaS

Bayesian methods often need only 500 visitors per variation and can cut test duration from 108 days to about 33. These methods fit B2B SaaS teams that lack traffic and cannot wait months for frequentist significance.

Sequential testing and multi-armed bandit approaches add more options for continuous improvement. Multi-armed bandit tests can run with about 250 visitors for the weakest variation, which suits fast iteration cycles in growth-stage SaaS companies.

If you want support with these advanced methods, book a discovery call to see how SaaSHero can run testing programs that drive ARR growth similar to the $504k TripMaster case.

Summary: Turning Significance into ARR

Effective B2B SaaS A/B testing uses chi-squared tests for conversion rates, t-tests for revenue metrics, and Bayesian methods for low-traffic situations. Follow the 7-step framework by defining revenue-focused hypotheses, sizing samples correctly, running tests for the right duration, applying the correct statistical methods, avoiding common pitfalls, validating with multiple tools, and tying results back to business impact.

Real success comes from connecting statistical rigor to revenue growth so your testing program produces ARR gains instead of vanity wins. Companies like TripMaster and TestGorilla show how this approach can unlock transformational, data-driven growth.

Frequently Asked Questions

Where can I find a free A/B test significance calculator for B2B SaaS?

You can find Google Sheets-based significance calculators online that are tailored to B2B SaaS. These tools include chi-squared calculations, t-test formulas for revenue metrics, sample size calculators for low-traffic situations, and Bayesian alternatives. Many also include templates for multi-touch attribution and for linking test results to downstream revenue.

What confidence level should I use for B2B SaaS A/B tests?

Most B2B SaaS teams use 95% confidence (p<0.05), which balances rigor and speed. This level means you accept a 5% chance that results are due to random variation. Some teams use 90% confidence for faster iteration, but that choice raises the risk of false positives. For high-stakes changes such as pricing or core product features, 99% confidence can be more appropriate. Stay consistent across tests and explain these thresholds clearly to stakeholders.

What is the minimum sample size for low-traffic B2B A/B tests?

Traditional frequentist tests usually need at least 500 visitors per variation for reliable results, which can take months for low-traffic sites. Sequential testing can work with similar sample sizes but may finish earlier when significance appears. Bayesian methods are more flexible and often need only 500 visitors per variation while cutting test duration from more than 100 days to about 33. Multi-armed bandit tests can run with as few as 250 visitors for the weakest variation and support ongoing optimization.

How do I manage significance with long B2B sales cycles?

Long sales cycles require layered measurement. First, test leading indicators such as demo requests, trial signups, or qualified leads that appear within your test window. Use these metrics for immediate statistical checks and track revenue impact separately through your CRM. Run tests for at least 4 to 8 weeks to cover full weekly cycles, and avoid decisions based on partial sales data. Use cohort analysis to see how each variation performs over time after you reach significance on early indicators.

What is the difference between statistical and business significance?

Statistical significance (p<0.05) shows that a result is unlikely due to chance, while business significance shows whether the change matters financially. A 5% conversion lift might be statistically valid but add only $500 in monthly revenue for a company with $1M ARR. Estimate business significance by multiplying conversion gains by customer lifetime value and subtracting implementation and opportunity costs. Focus on tests that deliver both statistical significance and meaningful impact, such as 15 to 20 percent conversion lifts or at least $50 monthly ARPU gains that justify the effort.